This post has been adapted from a talk given at ITx.

Hello everyone. Today I want to talk to you about the future. I want to take you on a journey into the years ahead.

Who am I? I’m Sam Jarman, a software developer at Sailthru, a marketing technology company. I’m a marketing fanatic, actor, runner and future thinker. I spend far too much time reading and learning about current and upcoming developments in technology, science and ethics. This post shares my thoughts, conclusions and insights with you.

I want to tell you about three things: the chatty, the smart and the small. These stories are predictions of the future, ranging from 10 years into the future, to 20, to 50.

The future is always a product of its past. So to predict the future, we have no choice but to look at what we have now, and how far we’ve come. For example, what if I told you just 10 years ago (2006) that you could type “tickets to Finding Dory” into Google and book right then and there, simply by touching a button on your phone? If I told you that we’d be able to land rockets on barges with no human intervention, would you think I was crazy? How about that you could send your heartbeat to your loved one straight from your wrist? How about that we have apps for everything, from tracking our steps to ordering a personal driver on demand? Some of the things I’ll talk about in this post will seem totally crazy, but so did these ideas just 10 years ago.

So let’s not be shy and just think crazy.

The Chatty

The way we communicate is set to change in the future. Big things are coming for social media and communications.

Think about how you communicate now. Do you tend to have long chats, or share stuff in the moment? Do you tend to meet up with people and talk, or do you send them stickers and emojis in a chat app?

We communicate now with social networks. Since the launch of Facebook and Twitter in the mid 2000s, we’ve seen a massive change in how people chat and interact online.

These are just some of the social networks that we’re on today, and I’d wager you see a few you’re a member of. Perhaps you use Facebook or Twitter to share updates. Maybe you’re trying to find that special someone on Tinder. Maybe you like to share photos on Instagram or, um, more secret photos on Snapchat, or maybe you like to share your opinion on Medium. Maybe it’s the lesser known ones like Musical.ly for sharing a duet, or Slack for communicating with colleagues. Whatever your poison, this is what the world is using now. But how are we using these networks? What devices are we using?

Let’s talk hardware. The very devices we use to input text and view media on social networks.

Right now, smartphones are everywhere… and smart watches such as Apple Watch or Android Wear are becoming more and more common.

EEG headsets are hardware that read brain waves and, when combined with software, can detect patterns. Right now it’s pretty rudimentary: at most, single commands can be issued (go up, go down, go left, go right)… but soon we’ll be able to get full text output from our brain waves. Even so, people are excited about this right now. Imagine playing a VR game without having to hold a controller, or being able to fly a drone just by thinking about where it should go. How about moving your wheelchair? The possibilities are endless. These headsets range from $100 for a lower-powered headset through to $800 for the Emotiv headset seen above. Just a year ago those prices were over double that. EEG headsets will be the future of input. And they’re only getting cheaper.

So that’s getting input from the user. Let’s think about display.

Google Glass was incredible, but arguably before its time for consumer consumption, and is sadly no longer for sale. The presence of a camera and voice activation was off-putting for many, but Glass hasn’t completely died. Last year, it quietly relaunched as Glass at Work, and is being used in the manufacturing, medical and entertainment industries. Still, the idea of a lightweight and capable display in our sights is an interesting one. No longer will we have to look at our watches or pull out our phones.

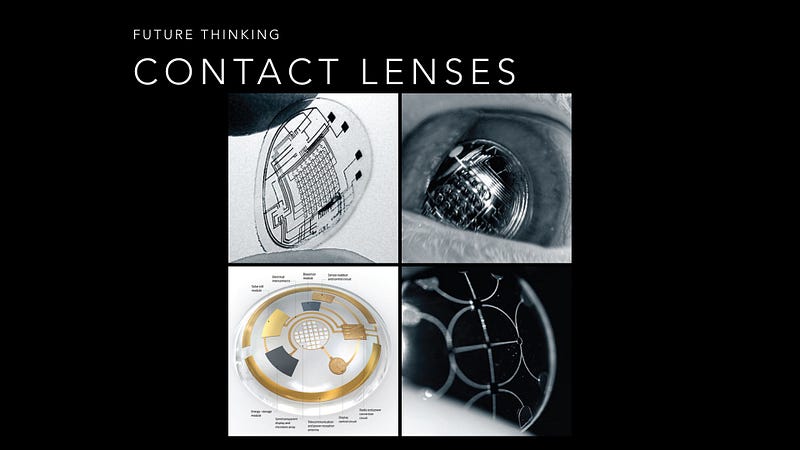

Looking even further ahead, heads up displays inside contact lenses are coming.

The current research can produce displays that are about 8 pixels, but it’s only a matter of time before they get better. Imagine the day when we get rich, augmented reality content right inside our eye.

Heads up displays will be the way we see content in the future. No longer will we have to look at our watches or take out our phones.

So, what would we get if we were to combine these two pieces of hardware for the use of social networks? Social networks in the future will use the latest hardware to increase, enhance and later perfect content creation and consumption. Let’s talk about two observations about social media and how we use it.

Firstly, young people are glued to their phones, constantly sharing media between them, whether it’s IMs, photos, videos, audio — whatever. The funnel of content is vast and from many sources.

Second, there’s a lot of noise.

Think about what we saw with TV. We changed from a model of many channels, such as cable boxes with 300 channels and nothing on, to Netflix, with only shows you want to watch, right now. Social will go this way too, where content you might like is promoted. Content that is just rubbish will sink to the bottom. Facebook and now Instagram have started to do this, and we’ll see much more soon.
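To make the idea of promoting content you might like a bit more concrete, here is a minimal sketch in Python. It is purely illustrative and is not how Facebook or Instagram actually rank feeds: the `Post` type, the `rank_feed` function and the "count the shared topics" score are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Post:
    """A hypothetical post: who shared it and which topics it touches."""
    author: str
    topics: set


def rank_feed(posts, interests):
    """Return posts ordered so those sharing the most topics with the viewer come first.

    The score is simply the number of topics a post has in common with the
    viewer's interests (an assumption for illustration only).
    """
    def score(post):
        return len(post.topics & interests)

    # sorted() is stable, so posts with equal scores keep their original order.
    return sorted(posts, key=score, reverse=True)


if __name__ == "__main__":
    feed = [
        Post("a", {"cats"}),
        Post("b", {"running", "code"}),
        Post("c", {"code"}),
    ]
    for post in rank_feed(feed, {"running", "code"}):
        print(post.author, sorted(post.topics))
```

A real network would weigh far more signals than shared topics, but the shape is the same: score every candidate post for one viewer, then show the highest scores first.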

So. Social Media. On demand. For you. By your peers. Just with your thoughts.

It’s what I like to call a NeuroSocial network. Neuro, coming from the Greek root word for nerve. Just like a nervous system, you will be able to tune in and out of social channels as you so desire. With an EEG headset and a heads up display, you can just think about what you want to see, and you will see it. Think about what you want to share, and you will share it. In a sense, what I’m describing is Digital Telepathy.

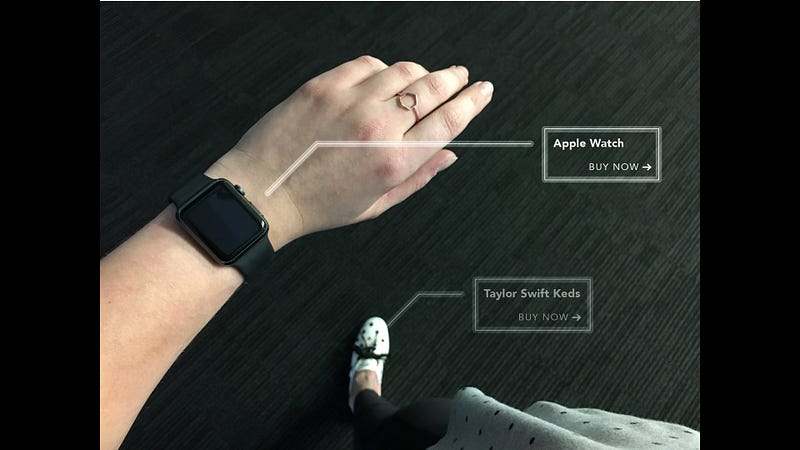

On NeuroSocial networks everyone (friends, families, brands) will be able to constantly publish their day’s activities to their feeds. Friends will be able to both pull in content and have content pushed to them based on their interests. Say your friends just ran a marathon: you’d be shown video of them crossing the finish line. Your friend just got married? See the point of view video of them walking back down the aisle. Say that techie you follow just published a new tutorial: you’d be shown the code right in your eye, and be able to look at your GitHub to see where you could apply it. These are your best moments shared in the best possible way. And of course, with all great content comes the opportunity for some creative advertising to slip in.

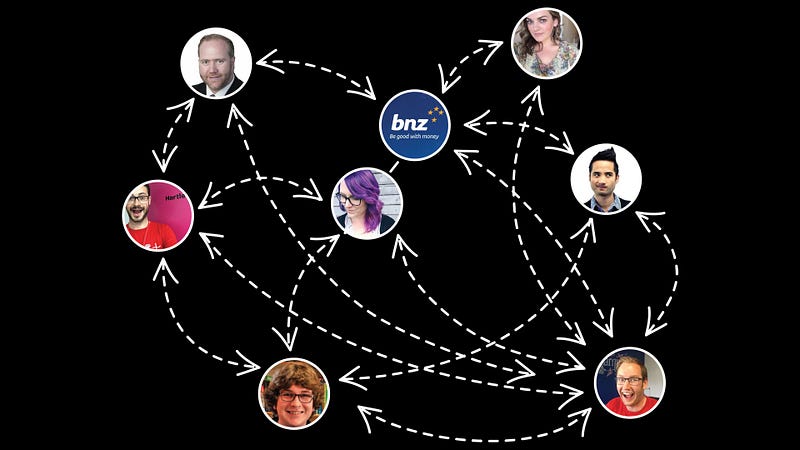

Being me, I think about advertising a lot. With NeuroSocial networks, I believe we’ll see the final form of social influencers, or what I call Sponsored Lives.

This is Felix Kjellberg, a YouTube user known by his handle, PewDiePie. PewDiePie makes videos of himself playing computer games. He’s somewhat popular because of it, and last year alone he made an estimated six million USD. Being a “YouTuber” is becoming a viable career, with the lifestyle being described as anything from “being in a band” through to running your own team of content producers.

Right now, it’s trivial to hire a “famous” Instagrammer to post your product to their thousands or even millions of followers. Users of Musical.ly, Facebook, Vine, Tumblr and Twitter do the same. Celebrity endorsements are on their way out, and marketers are finding the second class, online celebrities much more valuable for promotion. These people hold the attention of their audience, and with authentic product placement are able to have great influence. This channel for brands is becoming so much more viable for what one social media marketing legend would call “day trading attention”.

As a social media influencer, or a person with a sponsored life, being able to look down at your sponsored watch to your sponsored shoes, all while sharing them in the context of your everyday life, would be the holy grail. Not just for you, but for the brands paying you to do so. Gone are the days of Corona filming some surfers; instead we’ll see people sharing pictures of having a cold one [beer] on a nice day.

Content advertising and native advertising are on the rise, and a constantly connected population will help both brands and influencers living sponsored lives. We’ll see targeted advertising and product placement so good it just seems like friends telling you what’s in and what’s not. This is the future of fashion.

So that’s what might happen with social media, comms, fashion and advertising. I believe we’ll see this tech in as little as the next 10 years. It’s exciting to think about it. A land of connected humans with digital telepathy, NeuroSocial networks and information flowing freely between those who want to see it. That’s The Chatty.

Algorithms are the heart of this technology, and that brings me to my next topic. The Smart.

Artificial Intelligence will change the way we think about almost everything. Computers are now able to learn behaviour, make decisions and deduce and reason with incredible precision.

So let’s have a think about some of the cool stuff going on in AI now.

This is Google’s self driving car. Somewhat hideous, but then again I guess no one is going to see you drive it. It works. It can drive itself. Probably better than you. How about smaller?

This is Amazon Prime Air… announced in 2013 as a prototype, today it's looking refined and ready for use. This might sound ridiculous, but the idea is to deliver (small) packages in 30 minutes. Need a cup of sugar? Run out of beer? Need a flash drive? Perhaps you need urgent medical supplies or attention? Drone technology is *cough* taking off in big ways, and I’m excited to see how this proceeds.

However, some people have their concerns with this sort of technology.

When people think of AI they think of Robots, Terminator, Death and Destruction. It’s scary. It’s scary to think that we’re able to build robots that can kill humans. End a life. End all of our lives. However, rest assured… we’re not there yet.

er… definitely not ready yet. Damn bananas.

A lot of people get worried about robots killing people, going rogue, that sort of thing. Ultimately I think one of the most important steps we can take as a society with robots is:

DON’T GIVE ROBOTS GUNS.

It’s not hard! It’s best to always have a human in control. Automated systems are fine, but ultimately it should be a human who should make that call to end a life of another, for whatever reason.

For example, unmanned aerial vehicles (UAVs), also known as “drones”, have revolutionised warfare, security, surveillance and intelligence. Being unmanned, the risk of a pilot being shot down is irrelevant, and being smaller, the precision is higher.

At the heart of the 2004 movie I, Robot, starring Will Smith, was the notion of Asimov’s Laws of Robotics. Set in 2035, the film explored the notion of robots being servants to humans. A lot of people quote these laws and say “everything will be fine” when it comes to rogue AI.

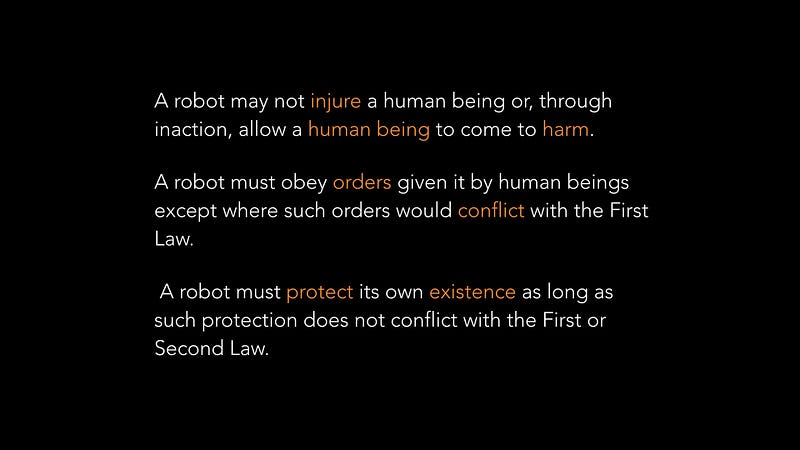

These are the laws that science fiction writer Isaac Asimov came up with to govern robots. In short, a robot may not harm a human, it must do what it’s told, and it must stay alive, but put itself behind us. They’re simple laws. Elegant, and intuitive. They’d seem to work. The problem with these laws is that if you give them to a programmer, the first thing they’ll do is ask you to define some things.

Human. Harm. Robot. Existence. Protect.

These are hard things to define. What is a human? What about pets, what about livestock? I don’t want my pet dog or farm of cows to die. What is conflict? What is existence? Can a robot save itself as long as its main hardware stays intact? Can it save one of your lives if it means leaving you in a coma? Let’s consider an example of harm.

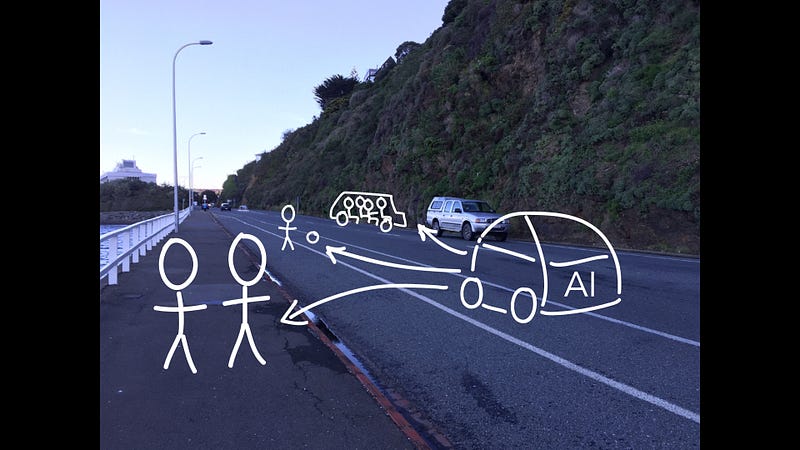

This is Evans Bay Parade in Wellington, New Zealand. It’s your standard coastal road. Imagine a car moving forward. To the right, a family of four; to the left, an elderly couple. Suddenly a child playing Pokémon Go runs out to try to catch a Charmander.

What would an AI driver do? This might sound similar to the Trolley Problem, a 1960s thought experiment in ethics whereby a train is heading for five people. You can pull the lever and make it switch tracks, but the other track has one person. What do you choose? Death through action or death through inaction?

At some point a programmer will have to write code so that an AI could determine the outcome of this situation. The US Military has ways of dealing with this, evaluating the value of life in a wartime scenario… but is life that black and white — can you put numbers on that, really? Are we comfortable with that?

A lot to think about. Are AI just heartless machines? Or are they something we could relate to on a deeper level?

One approach to this unfriendly AI thought is to make its hardware manifestations, such as robots, look like us. A friendly smile, some bright eyes, warm hands or a cute laugh. There is a bunch of research looking into how humans and humanoid robots will interact, and it’s not hard to imagine a future where children will be able to interact with a robot no differently to one of their stuffed toys or the family pets. But does it help for robots to look humanoid? Is that the most optimal design for their tasks?

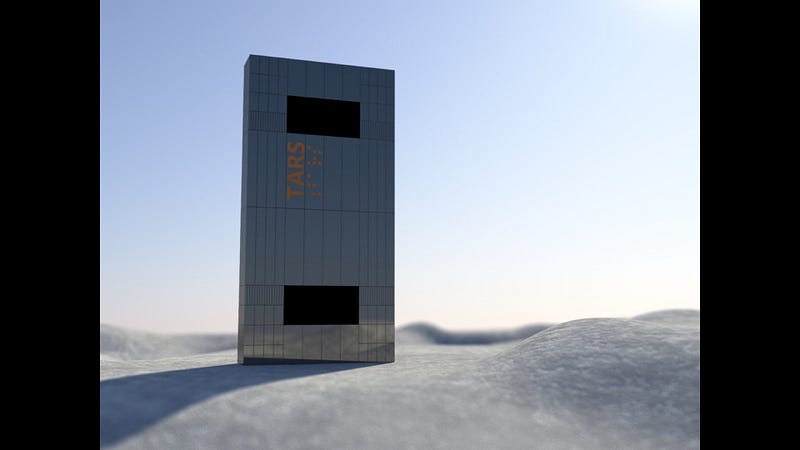

This is TARS from the film Interstellar, with the ability to transform in many different ways. He can extend arms, and extend them further still for finer movements. In the film, he is extremely well suited to his role. There are many non-humanoid robots in use today, such as those in car factories or other manufacturing plants. TARS, like most future robots, has a personality, so does this affect our ability to interact with him? You might have noticed I’ve been describing TARS as a male, using the terms “him” and “he” rather than “it”… so maybe the humanoid form isn’t required for suitable interaction?

TARS was a valuable member of the crew, able to crunch data that no human could, but he was not a replacement for anyone. But what happens when robots do start to replace human roles?

Perhaps killer robots aren’t the worst thing that is coming… perhaps the real threat is robots’ place in society in general?

Right now the most common job in the middle states of the US is truck driving. However, if we made these trucks drive themselves, shipping costs could become so much smaller. We could drive them 24/7, remove the sleeper cab, use more optimal routes and optimise the use of fuel. However, what would the thousands of men and women now unemployed do to provide for their families? What productive place in society can we find for them?

Many more jobs stand to be lost by advancements in technology and AI — and we must be careful that we have replacement jobs waiting for our fellow humans who are now out of work.

Assuming tech trends continue as they do, computer scientists should have ongoing discussions with those who are experts in philosophy, economics, psychology and ethics in order to make the future better for all of us.

Artificial Intelligence will change the way we think about almost everything. Ultimately we must be very careful with it. The next 20 years will bring exciting changes and challenges in the space: not just tech, but legal, ethical and economic challenges.

Finally, my last topic is nanotechnology. The Small.

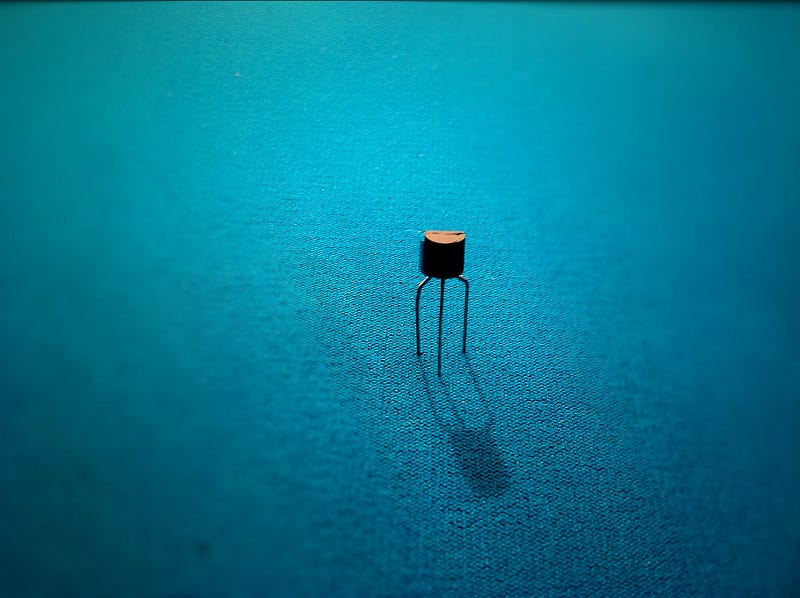

This is a transistor. They’re largely used as switches. They have three legs: power in, a control leg in the middle, and possible power out. The presence of power on the middle leg lets it flow out of the third. They can be used to store state. A bunch of them means memory, and a bunch more means processing. Since about the 1970s we’ve been able to double the number of transistors in a microprocessor roughly every two years (often quoted as every 18 months). This means more and more data processing year on year. Some of you might know this as Moore’s Law, named after Intel co-founder Gordon Moore, who first observed the trend. These advances are achieved by shrinking transistors with new techniques. This macro photo was taken of a transistor the size of a grain of rice. The ones in your phone are about 1000 times smaller than the width of your hair.
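To get a feel for how quickly that doubling compounds, here is a rough back-of-the-envelope sketch in Python. The function name and parameters are my own for illustration, and the starting point (about 2,300 transistors in 1971, the Intel 4004) is an assumption I’ve added rather than something from the talk. The doubling period is a parameter, so you can try both 18 and 24 months.

```python
def projected_transistors(start_count, start_year, year, doubling_months=18.0):
    """Project a transistor count assuming it doubles every `doubling_months`.

    This is plain compound doubling: count = start_count * 2 ** (elapsed / period).
    It is a trend-line estimate, not a statement about any real chip.
    """
    elapsed_months = (year - start_year) * 12
    doublings = elapsed_months / doubling_months
    return start_count * 2 ** doublings


if __name__ == "__main__":
    # Assumed starting point: roughly 2,300 transistors in 1971 (Intel 4004).
    for period in (18.0, 24.0):
        estimate = projected_transistors(2300, 1971, 2016, doubling_months=period)
        print(f"doubling every {period:.0f} months -> about {estimate:,.0f} transistors in 2016")
```

The two doubling periods give wildly different answers over 45 years, which is a nice reminder of how sensitive exponential predictions are to their assumptions.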

It’s starting to become obvious that Moore’s Law will run out one day. And for that, we’ll have to look to biology and chemistry to see what we can achieve. Perhaps nanotechnology will be more on the molecular level?

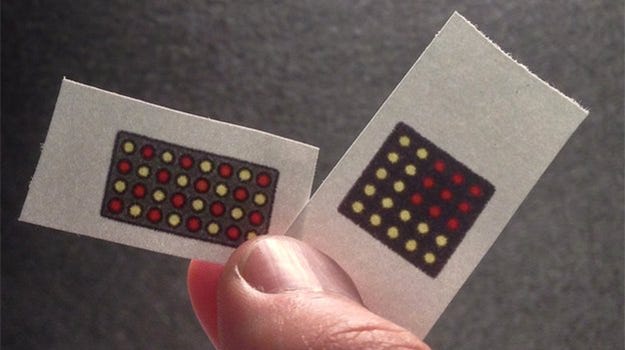

We’re currently seeing a revolution in paper tests.

With just a strip of special paper and your urine, we’re now able to detect the likes of Ebola, Malaria, Diabetes and much more. These tests are instant and don’t take weeks for lab results to come back. They’re even being used to help solve the epidemic of fake drugs sold into 3rd world countries by corrupt charities. This is powerful stuff.

In a world of Fitbits and fitness tracking, it’s easy to think forward and see a future of Smart Loos, where our home bathrooms will be able to monitor our bodies for signs of health.

Combining research such as smart paper tests with other developments, these could sample our urine for all sorts of medical tests and alert us to risks. Combining this with the ever increasing internet of things movement, Smart Loos could inform our doctors, or even our shopping lists… all through the power of biotechnology.

This is a blood vessel. Sangeeta Bhatia, a physician, bioengineer and researcher at MIT, leads a team that has come up with a way to use tiny molecules to detect cancer. Simply put, they’re able to get certain particles to leak from the blood into cancer tumours, react with the enzymes produced by the tumour, and then pass out through the kidney into your urine. The resulting molecule is used to detect cancer. Awesome. There’s a new TED talk on biology breakthroughs every week, and I’m excited to see what’s going to come next. Some of it might come close to home!

This is IBM BlueFern, a supercomputer that lives at the University of Canterbury (where I studied!) in Christchurch, New Zealand. They have a project there to simulate a tiny section of your arm. Every molecule travelling in and out of every cell. While it’s just a tiny piece at the moment, it’s easy to imagine this being extended to whole organs or your whole body and brain. We could run human- and animal-free drug experiments on a range of generated bodies in a matter of days. Low cost. No risk. Faster iterations. This sort of biotech thinking is where our heads must be at. These are what big computers can do… but what about the smaller ones?

While there is some thinking that Moore’s Law will fail to continue one day, we know that transistors are getting smaller. It has been proven to be possible to get transistors down to 5nm or even smaller. It’s even possible to build transistors out of just a few molecules. So let’s postulate about what we could do with such small devices.

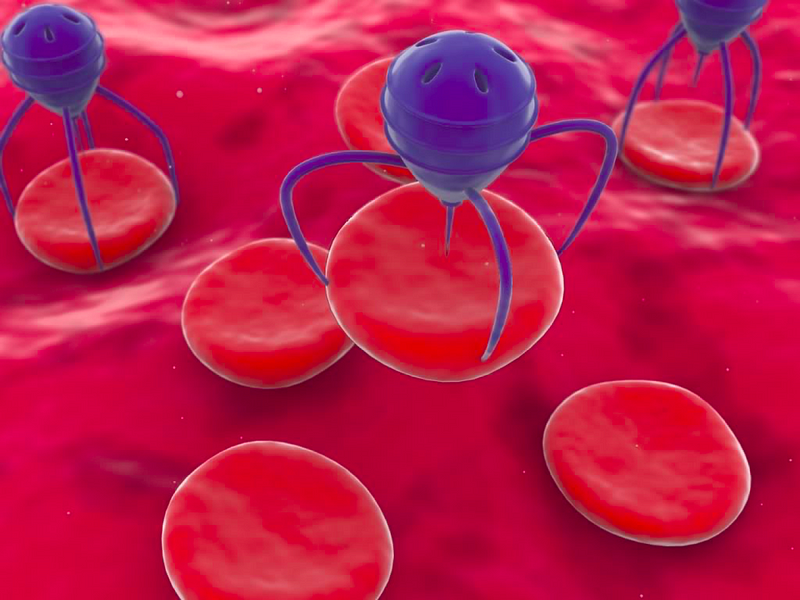

For me, this means nanobots. The possibilities with nanobots are amazing. Imagine a walking or flying device that could be remotely controlled and could remove, convert or add material anywhere. This could be metal, organic material or even plastics. These critters, not one by one but by the few billion, are how I see the world changing. I want to share some exciting use cases for this technology. I’ll start with a simple use case.

Grooming. Do you ever wake up feeling a bit less than fresh, in need of a shave? Maybe your fingernails are too long, or that donut from yesterday is weighing on your mind.

Imagine before you go to sleep, you set your alarm and then open your app for Nano-Grooming. You select nails, hair, beard, unwanted fat and then suddenly a bunch of critters emerge from a container and go to work on your body. By the time you wake up, you’re looking sharp and ready to start the day. Fresh breath and all.

Maybe this could be used for more medicinal purposes? Finding and treating cancer or other diseases? Removal of tumors or cysts? Removal of wrinkles, fat or sugar? Removal of dead skin or infection? How about treating the 3rd degree burns of our bravest firefighters?

Milford Sound, New Zealand

How about the environment? Imagine a world covered in an invisible blanket of nanobots. In the oceans, the skies, the forest and the trees. We could remove CO2, effectively reversing climate change. We could fix the ozone layer. We could clean the oceans of plastics, spilled oil and other rubbish. We could rescue wrecks and recycle lost materials. We could even remove Didymo, a threatening weed from New Zealand’s creeks. We could keep the Milford Sound looking…beautiful.

Without going into too much detail, the world is a troubled place, with conflict brewing more often than we like.

Can we use nanotechnology to disarm those who mean us harm? Anything from disarming a car being driven by a drunk driver through to dissolving the firing mechanism of a nuclear warhead.

Can we use this technology to build bridges of resolution between areas of conflict by increasing compassion, understanding and awareness? Perhaps nanobots could sit in your ear and perfectly translate conversations between foreign leaders? A lot of technology has been developed during wartime, and modern technology such as encryption, touch screens, transport and more was paid for by military funding. Perhaps nanobots will see their fruition in this way?

Nanobots could save lives in so many ways. They could be used in scenarios from the home, the street, the operating theatre and the battlefield to assisting mother nature itself. There are even more ways this technology could be used that we can’t even think of yet.

And that’s The Small. A vision of the future where there are trillions upon trillions of tiny nanobots floating around us in a cloud, keeping us, our families, our friends and our environment safe. Safe from harm, from disease and from ourselves. I think we’ll see this in about 50 years’ time, perhaps even sooner.

So that’s a view of the future. But it might not be the chatty, the smart or the small; it might be something we can’t even see or even begin to predict now.

Predictions of the future almost never turn out to be true, but whatever that next thing is, it is right around the corner.

No matter when you’re reading this post or others like it, that next thing will change the world. And it’ll arrive so fast, faster than any of us think. It won’t be shocking or startling… we humans will adapt to it, just like we always do, and life will get a little better, just like it always has.

And whatever that next thing is, I really hope I’m there with all of you to see it.

I’m Sam Jarman, thank you very much for reading this piece. It means a lot to me.